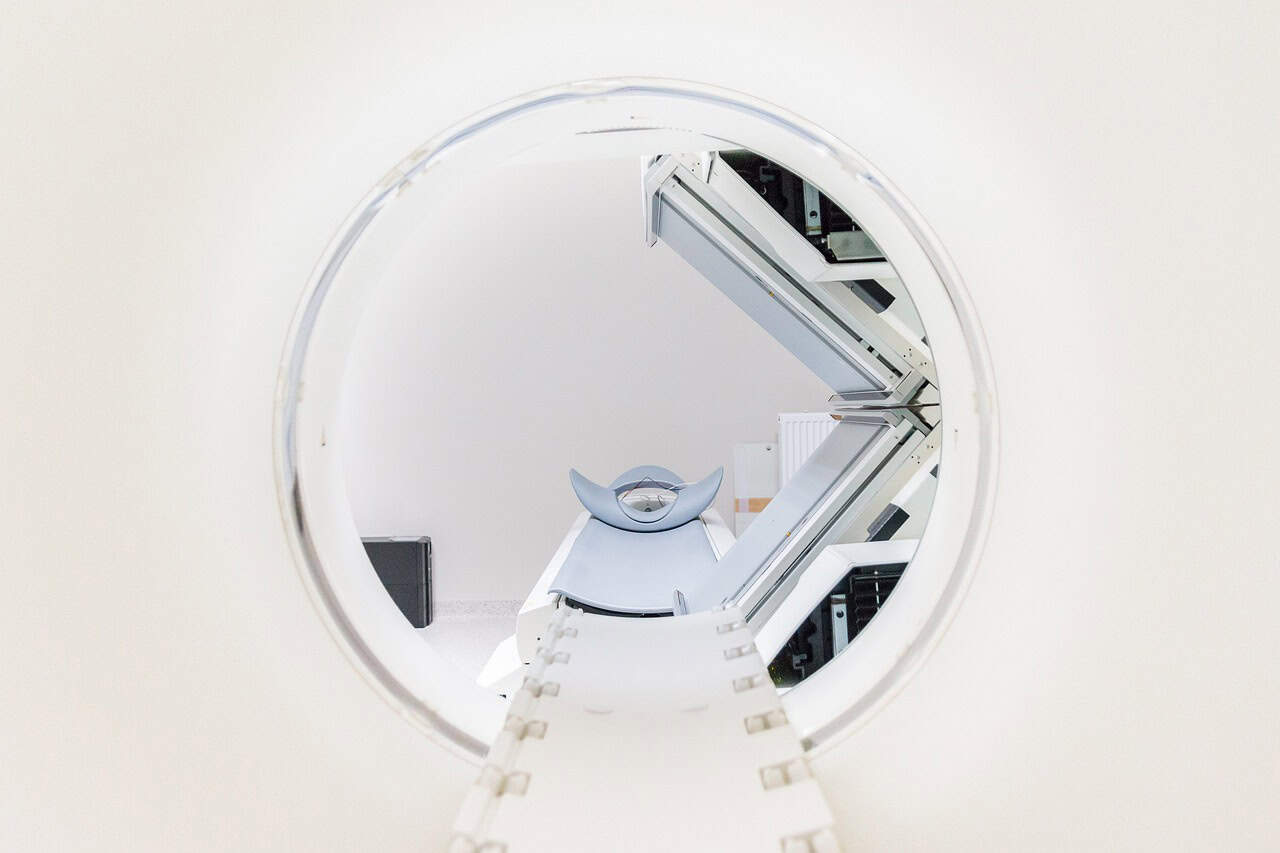

Medical imaging has long been a cornerstone of diagnosis and treatment planning, and artificial intelligence is reshaping how images move from scanners to clinical decisions. New software and models help speed tasks that once took teams of people many hours, letting clinicians focus on the trickier cases while routine work flows more smoothly.

The balance between automation and human oversight invites fresh questions about trust, training, and task assignment in everyday practice. The following sections look at five concrete ways AI alters the steps inside imaging services, with practical examples and observed effects.

1. Faster Image Triage And Prioritization

Algorithms trained to recognize urgent findings can scan incoming exams and flag those that need prompt attention, cutting the time from scan to notification in emergency cases. Many radiology units report that automated triage reduces the time a patient with life-threatening pathology waits for a specialist to review images, which can make a measurable clinical difference.

These tools do not replace the radiologist but act as a first pass that points clinicians toward the front of the queue. Over time, the models learn what clinicians treat as urgent and adjust scoring so the system aligns with local practice.

Triage systems also reassess exam order as new data appears, so a study that once seemed routine can rise in priority when subtle signs are detected on later series. That dynamic re-ranking keeps human teams aware of shifting risk without forcing constant manual review of every file.
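The re-ranking idea can be illustrated with a small priority queue. This is only a sketch under stated assumptions: the class name `TriageWorklist`, the study identifiers, and the 0 to 1 urgency scores are hypothetical, and a real triage product would sit behind the department's own worklist software rather than a Python heap.

```python
import heapq
import itertools


class TriageWorklist:
    """Hypothetical worklist ordered by urgency score; higher scores are read first."""

    def __init__(self):
        self._heap = []                   # entries: (-score, tie_breaker, study_id)
        self._current = {}                # study_id -> most recent entry
        self._counter = itertools.count() # keeps entries unique and ordering stable

    def upsert(self, study_id, score):
        """Add a study, or re-rank it when a newer score arrives."""
        entry = (-score, next(self._counter), study_id)
        self._current[study_id] = entry
        heapq.heappush(self._heap, entry)

    def pop_most_urgent(self):
        """Return the study with the highest current score, skipping stale entries."""
        while self._heap:
            entry = heapq.heappop(self._heap)
            study_id = entry[2]
            if self._current.get(study_id) == entry:
                del self._current[study_id]
                return study_id
        return None


worklist = TriageWorklist()
worklist.upsert("CT-001", 0.20)   # looks routine on the first series
worklist.upsert("CT-002", 0.65)
worklist.upsert("CT-001", 0.90)   # a later series raises the score
first = worklist.pop_most_urgent()
second = worklist.pop_most_urgent()
print(first, second)
```

Old scores are left in the heap and ignored when they surface, which keeps re-ranking cheap: a new score is just another push, and nobody has to re-sort the whole list by hand.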

Teams report that reduced manual sorting frees staff for tasks that require pattern matching or patient communication. The net effect is a smoother flow from scanner to report and fewer near misses when time matters.

2. Automated Detection And Quantification Of Findings

Computer models can highlight nodules, fractures, bleeds, and other anomalies with bounding boxes or heat maps that make it easier to spot small targets in large image sets. Quantitative outputs such as lesion volumes, percent change over time, and density measures provide objective metrics that support follow-up decisions and reduce variability in measurements.
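Two of those quantitative outputs are simple arithmetic once a segmentation exists. The sketch below assumes a lesion mask has already been reduced to a voxel count and that voxel spacing in millimetres is available from the image metadata; the function names and example numbers are illustrative only.

```python
def lesion_volume_ml(voxel_count, spacing_mm):
    """Volume in millilitres from a segmented voxel count and (dx, dy, dz) spacing in mm."""
    dx, dy, dz = spacing_mm
    return voxel_count * dx * dy * dz / 1000.0  # 1 mL = 1000 cubic millimetres


def percent_change(baseline, follow_up):
    """Relative change from baseline to follow-up, expressed as a percentage."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (follow_up - baseline) / baseline * 100.0


# Illustrative numbers: the same lesion on two exams with 0.5 x 0.5 x 1.0 mm voxels.
baseline_ml = lesion_volume_ml(12_000, (0.5, 0.5, 1.0))   # 3.0 mL
follow_up_ml = lesion_volume_ml(15_000, (0.5, 0.5, 1.0))  # 3.75 mL
growth = percent_change(baseline_ml, follow_up_ml)        # 25.0 percent
print(baseline_ml, follow_up_ml, growth)
```

Because the same formula runs on every exam, two readers looking at the same segmentation get the same number, which is where the reduction in measurement variability comes from.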

When clinicians compare automated figures with human reads, they often find tighter agreement after a short period of mutual calibration. The routine nature of numeric outputs makes tracking disease progression faster and more transparent across care teams.

Beyond single-lesion measures, AI can perform organ-wide segmentation to create reproducible baselines for the heart, liver, lungs, and brain, allowing longitudinal tracking without tedious manual contouring. That reduces inter-reader variability and helps studies stack up more cleanly for clinical trials or multicenter registries.

As automated measures appear in reports more often, clinicians grow accustomed to correlating numbers with symptoms and therapy choices. The result is less guesswork when planning follow-up intervals or assessing response to treatment.

3. Seamless Workflow Integration And Reporting Support

When AI tools plug directly into picture archiving and communication systems (PACS), they can insert findings into the same worklist clinicians already use, so the learning curve stays small. Integrated results can be presented as suggested text snippets that the radiologist edits, which speeds report generation while letting the clinician maintain final judgment.

These suggestion-driven workflows reduce repetitive typing and standardize phrasing across teams, which helps downstream users such as referring physicians and coders. Over time, templates and model outputs converge toward formats that match departmental habits and billing needs.

Reporting support can also include automated quality checks that catch omitted views, mismatched identifiers, or incomplete sequences before a report is finalized, lowering the incidence of preventable errors. These checks run quietly in the background and prompt the reader when an inconsistency might affect interpretation.
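A rule-based version of such checks can be sketched in a few lines. Everything here is an assumption made for illustration: the dictionary keys (`patient_id`, `views`, `series`, `images_received`, `images_expected`) and the prompt wording are hypothetical and do not come from any particular PACS or reporting product.

```python
def pre_signoff_checks(study, order, required_views):
    """Return short prompts for the reader; an empty list means nothing was flagged."""
    prompts = []

    # Mismatched identifiers: the images and the order should name the same patient.
    if study["patient_id"] != order["patient_id"]:
        prompts.append("Patient ID on images does not match the order.")

    # Omitted views: compare what the protocol requires with what arrived.
    missing_views = sorted(set(required_views) - set(study["views"]))
    if missing_views:
        prompts.append("Missing views: " + ", ".join(missing_views))

    # Incomplete sequences: fewer images received than the scanner announced.
    for series in study["series"]:
        if series["images_received"] < series["images_expected"]:
            prompts.append(
                f"Series {series['name']} is incomplete "
                f"({series['images_received']}/{series['images_expected']} images)."
            )
    return prompts


study = {"patient_id": "P123", "views": ["PA"], "series": []}
order = {"patient_id": "P123"}
prompts = pre_signoff_checks(study, order, required_views=["PA", "LAT"])
print(prompts)
```

Returning a list of short messages, rather than blocking the report, matches the idea of a prompt that the reader can act on or dismiss.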

That kind of safety net blends well with human review because prompts are short and actionable, not intrusive. Teams often report fewer callbacks and smoother information flow when these safeguards are active.

4. Natural Language Processing For Faster Documentation

Natural language tools can extract key findings from radiology reports and convert them into structured data fields that feed registries, research projects, and clinical dashboards. That process transforms free text into searchable elements without forcing clinicians to change how they write, preserving natural style while unlocking analytic value.
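To make the free-text-to-fields step concrete, here is a deliberately simple stand-in that uses a regular expression instead of a trained language model. The pattern, the field names (`finding`, `size_mm`, `location`), and the sample sentence are all assumptions for illustration; production tools handle negation, synonyms, and context that this sketch ignores.

```python
import re

# Matches phrases such as "6 mm nodule in the right upper lobe" or "1.2 cm mass".
FINDING_PATTERN = re.compile(
    r"(?P<size>\d+(?:\.\d+)?)\s*(?P<unit>mm|cm)\s+"
    r"(?P<finding>nodule|mass|lesion)"
    r"(?:\s+in\s+the\s+(?P<location>[a-z ]+?lobe))?",
    re.IGNORECASE,
)


def extract_findings(report_text):
    """Turn measured findings in free text into a list of structured rows."""
    rows = []
    for match in FINDING_PATTERN.finditer(report_text):
        size = float(match.group("size"))
        if match.group("unit").lower() == "cm":
            size *= 10.0  # normalise every size to millimetres
        location = match.group("location")
        rows.append({
            "finding": match.group("finding").lower(),
            "size_mm": size,
            "location": location.lower() if location else None,
        })
    return rows


text = "There is a 6 mm nodule in the right upper lobe. A 1.2 cm mass is also noted."
rows = extract_findings(text)
for row in rows:
    print(row)
```

The radiologist's sentence is left untouched; the structured rows are derived alongside it, which is the property that lets registries and dashboards consume reports without changing how they are written.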

Structured outputs also let care teams run queries across large report collections to spot trends or cluster similar cases for review. This lowers the barrier to retrospective study design and supports quality initiatives with less manual curation.

Speech-to-text and automated summarization reduce the burden of typing and revision for busy clinicians, and when paired with accuracy checks they can speed documentation without sacrificing clarity. The system can propose a short impression based on detected findings, and the radiologist can tailor the wording to match nuance or clinical context.

Many users describe a sense of regained time that they then invest in complex interpretations or teaching. Over time, the language model tunes itself to local phraseology so suggested text feels familiar rather than foreign.

5. Continuous Learning And Quality Monitoring

Modern imaging workflows can incorporate feedback loops that let models improve as clinicians correct outputs or add annotations, which keeps performance aligned with evolving practice. Those feedback channels support model versioning and controlled retraining so teams can track how changes affect both sensitivity and false positive rates.

Regular audits that compare model outputs with consensus reviews surface systematic biases or gaps in training data before those issues propagate. That attention to ongoing performance builds confidence and keeps automation from drifting away from clinical needs.
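An audit of this kind boils down to counting agreements and disagreements per model version. The sketch below assumes each audited case is recorded as a tuple of model version, whether the model flagged the exam, and whether the consensus review called it positive; the version labels and data are made up for illustration.

```python
from collections import defaultdict


def audit_by_version(cases):
    """cases: iterable of (model_version, model_flagged, consensus_positive)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for version, flagged, truth in cases:
        # "t"/"f": did the model agree with consensus; "p"/"n": what the model said.
        key = ("t" if flagged == truth else "f") + ("p" if flagged else "n")
        counts[version][key] += 1

    summary = {}
    for version, c in counts.items():
        positives = c["tp"] + c["fn"]   # consensus-positive cases
        negatives = c["fp"] + c["tn"]   # consensus-negative cases
        summary[version] = {
            "sensitivity": c["tp"] / positives if positives else None,
            "false_positive_rate": c["fp"] / negatives if negatives else None,
        }
    return summary


cases = [
    ("v1", True, True), ("v1", False, True), ("v1", True, False), ("v1", False, False),
    ("v2", True, True), ("v2", True, True), ("v2", False, False), ("v2", False, False),
]
summary = audit_by_version(cases)
print(summary)
```

Keeping the two rates side by side for each version makes it visible when a retrained model gains sensitivity at the cost of more false alarms, or the reverse.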

Quality monitoring also helps managers measure throughput, report turnaround times, and case complexity in ways that highlight bottlenecks and opportunities for staff training. Data-driven dashboards fed by both model outputs and human reads show where delays cluster and which protocols produce the most repeat scans.
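One of the simplest dashboard figures is median report turnaround per modality. The following sketch assumes each exam is logged with a modality label, a scan-completed timestamp, and a report-signed timestamp; the record layout and the sample times are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def median_turnaround_minutes(exams):
    """exams: iterable of (modality, scan_completed, report_signed) datetimes."""
    by_modality = defaultdict(list)
    for modality, scanned, signed in exams:
        by_modality[modality].append((signed - scanned).total_seconds() / 60.0)
    return {modality: median(values) for modality, values in by_modality.items()}


exams = [
    ("CT", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 30)),
    ("CT", datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 8, 11, 30)),
    ("CT", datetime(2024, 1, 8, 12, 0), datetime(2024, 1, 8, 13, 0)),
    ("MR", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 11, 0)),
    ("MR", datetime(2024, 1, 8, 13, 0), datetime(2024, 1, 8, 17, 0)),
]
turnaround = median_turnaround_minutes(exams)
print(turnaround)
```

The median is used here rather than the mean so that a single exam left unsigned overnight does not dominate the figure; whether that is the right choice depends on what the department wants the dashboard to surface.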

Armed with that information, teams can adjust scheduling, staff allocation, or protocol parameters to smooth flow. The end result is a more resilient service that learns from its own data and from clinician corrections without asking humans to shoulder all of the work.